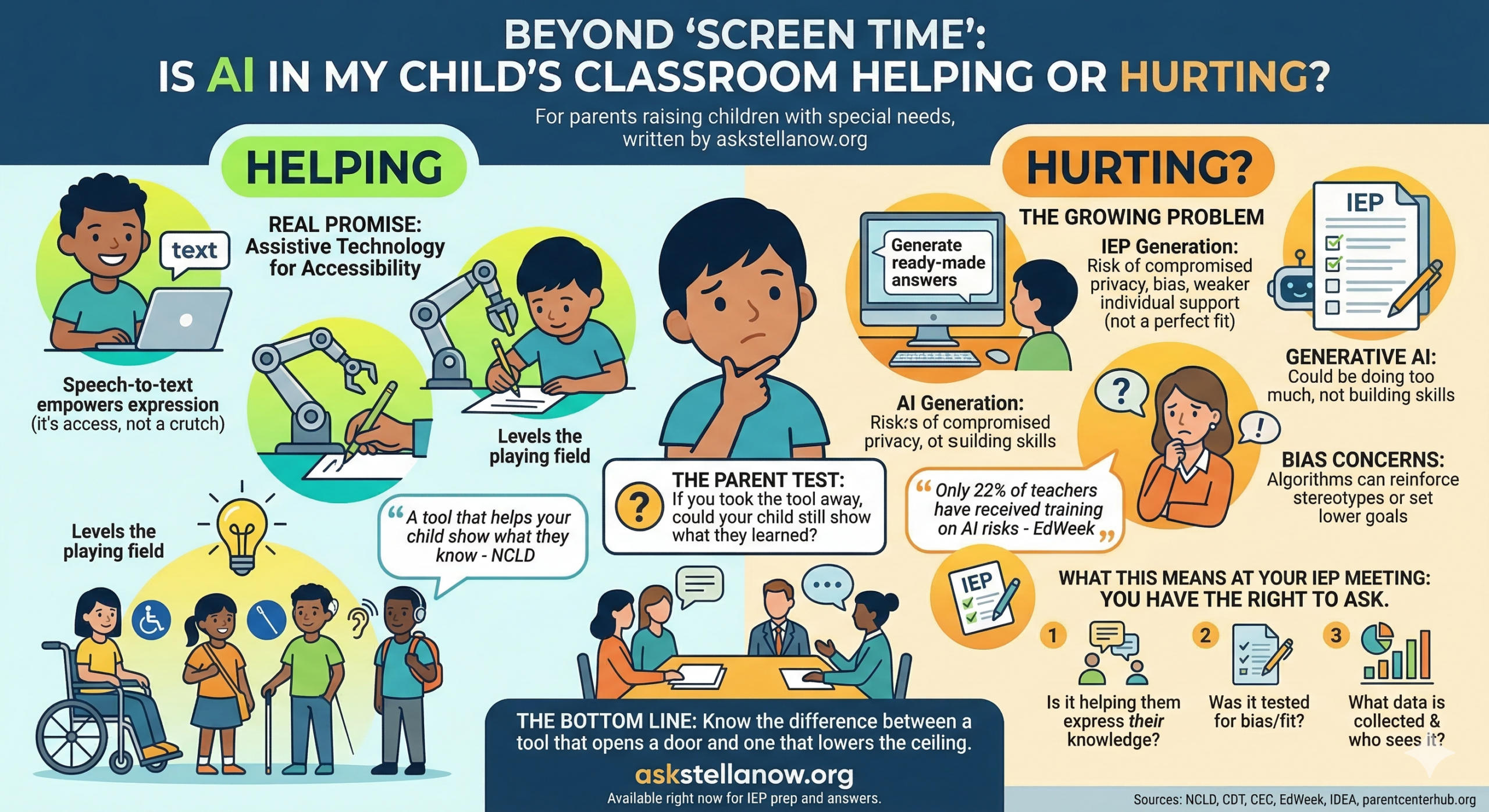

For parents raising children with special needs, written by askstellanow.org

You have probably heard the phrase “screen time” your whole parenting life. Too much TV. Too much iPad. And now, AI is showing up in your child’s classroom, and nobody asked you if you were ready.

Here is what you actually need to know.

Something big is changing right now

Schools across the country are moving fast to bring AI tools into classrooms. Some of those tools are genuinely helpful. Some are not. And the line between the two matters more for your child than for any other student in that building.

People with disabilities have long benefited from technology. For decades, assistive technology has helped students with disabilities learn, and AI holds real promise for making lessons more accessible (National Center for Learning Disabilities).

But there is a problem growing alongside that promise.

A growing number of special education teachers are now using AI to help write and manage IEPs. While many say it saves them time, researchers warn that this could compromise your child’s privacy, reinforce bias, and weaken the individualized supports that federal law requires (Government Technology).

In plain English: a tool designed to save a teacher two hours of paperwork could quietly produce an IEP that does not actually fit your child.

The question every parent should be asking

There is a difference between a tool that helps your child show what they know and a tool that does the work for them.

Speech-to-text for a child who struggles to write by hand is access. It opens a door that would otherwise stay closed. The child still has to think. The child still has to know the answer. The tool just removes the physical barrier between their brain and the page.

An AI tool that generates answers for your child is a different thing entirely. It may look like help. It may even raise their grade. But it is not building anything inside your child. And for kids who already face steep learning curves, that distinction matters enormously.

Assistive technology is not about giving a student an unfair advantage. It is about leveling the playing field. If a student cannot physically write, speech-to-text allows them to express their ideas (Leapfrog).

One test parents can apply: if you took the tool away, could your child still show you what they learned? If the answer is no, the tool may be doing too much.

The bias problem nobody is telling you about

Here is a piece of this conversation that does not get enough attention.

Biases in AI training data could reinforce stereotypes or produce inequitable recommendations, especially since students with disabilities are often underrepresented in the data sets used to build these tools (Government Technology).

That means an AI tool might look at your child’s profile and make assumptions based on what it has seen before, not on who your child actually is. It might suggest lower goals. It might recommend less ambitious supports. It might treat your child as a category rather than a person.

As of early 2025, only 22% of middle and high school teachers surveyed said they had received any training on the risks of AI, including inaccuracy and bias (Education Week). In other words, the person using the tool your child’s school purchased may have had no training on its limitations at all.

What this means at your next IEP meeting

Under federal law, the IEP team must consider assistive technology at every IEP meeting to determine whether it would enable the student to benefit from their educational program and make progress toward their goals (PEATC).

You have the right to ask questions. Here are three that cut through the noise.

Question 1: Is this tool helping my child express what they know, or is it doing the work for them?

Ask the team to show you an example. What did your child actually produce with the tool? Could they walk you through their thinking? The tool should be a bridge, not a replacement.

Question 2: Was this AI tool tested for bias, and does it actually fit my child’s specific situation?

Data privacy, algorithmic bias, and accessibility must be front and center in any conversation about implementation. Students with disabilities deserve technologies designed with inclusivity in mind, not as an afterthought (Council for Exceptional Children). Ask who selected the tool, what the selection process looked like, and whether anyone reviewed it specifically for kids like yours.

Question 3: What data does this tool collect about my child, and who can see it?

Schools are responsible for compliance with privacy laws like FERPA, which protect sensitive student information including performance data, behavior logs, and recordings (Springer). Ask directly: who has access to what this tool collects? How long is that data kept? Can you request it or have it deleted?

The bottom line for your family

AI in schools is not going away. Resisting it entirely is not the answer either, because the right tools, used well, can genuinely change what is possible for your child.

The answer is knowing the difference between a tool that opens a door and one that quietly lowers the ceiling.

You are the expert on your child. The IEP table is the one place in your child’s life where your expertise has legal standing. These three questions are how you use it.

If you want help preparing for an upcoming IEP meeting or figuring out which assistive technology questions apply to your child’s specific situation, Stella is available right now at askstellanow.org. No appointment. No waiting room. Just answers.

Sources: National Center for Learning Disabilities (NCLD), Center for Democracy and Technology (CDT), Council for Exceptional Children (CEC), EdWeek, Individuals with Disabilities Education Act (IDEA), Parent Center Hub (parentcenterhub.org)